Silvia Montaña-Niño, The University of Melbourne and T.J. Thomson, RMIT University

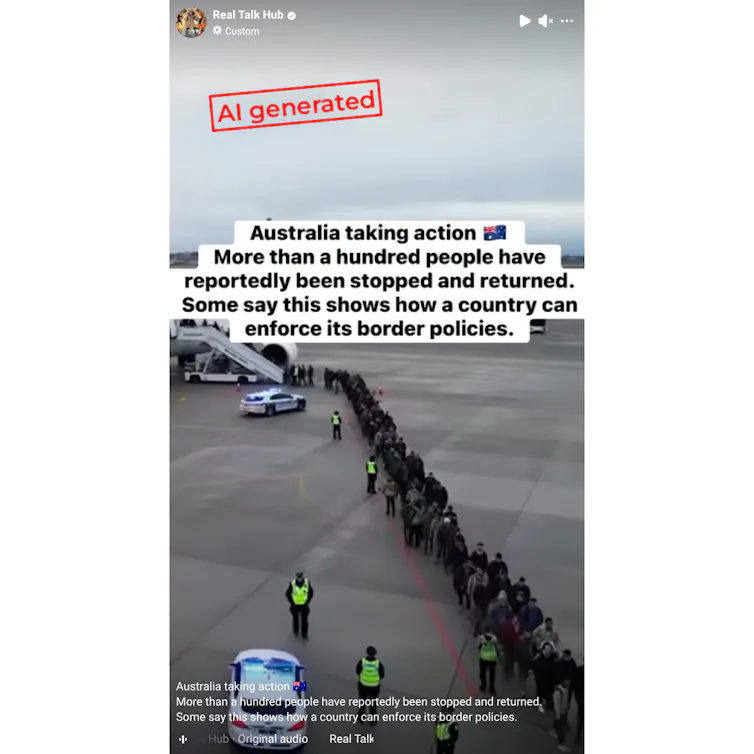

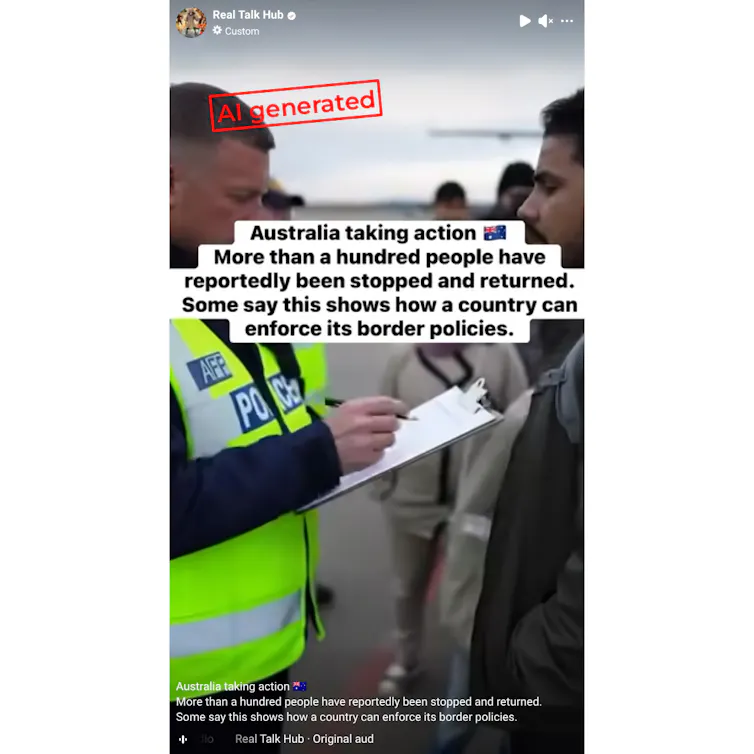

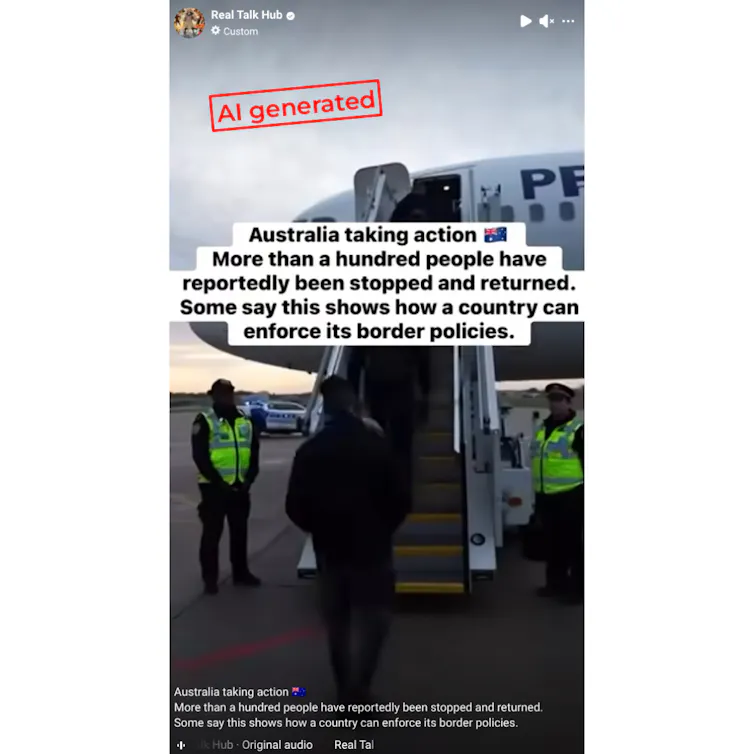

Global society makes billions of images and uploads hundreds of thousands of hours of video on the internet every day. The problem is, some of this content is misleading or downright wrong. And when it’s in visual form, it can be particularly convincing.

Take the Met Gala that happened earlier this month in New York. While photographers snapped photos of Rihanna, Beyoncé and Nicole Kidman as they strutted their stuff, others saw “photos” of celebrities, such as Rosalía, Lady Gaga and Jacob Elordi, who were actually elsewhere (the images in the below Instagram carousel are AI generated).

While this type of AI slop might seem harmless and can be easily verified, other “media fakery” is becoming far more problematic and demands more robust techniques to verify. Traditional verification techniques are falling short as AI becomes increasingly convincing and the line between authentic and synthetic blurs. This is true across all content, from still images to moving ones and audio deepfakes. The volume of content and the speed at which it travels doesn’t help. It also doesn’t help that fact-checking can take hours or days while fakes can be created in seconds.

First, equip yourself

Guides on detecting AI-generated content suggest multiple strategies and acknowledge there are no perfect solutions. But there are helpful things you can do. Familiarise yourself with examples of fakes and study how they were fact-checked. This helps you understand what is possible and learn how fact-checkers sort real from fake.

Look deeply. Zoom in. Pause the content or watch it frame-by-frame. Inspect the small details. Look out for inconsistencies, textures that are flat when they shouldn’t be, or patterns that are too perfect or are inexplicably off. Does the location shown match with where the scene is purported to be? Do shadows fall naturally and do lines follow the rules of perspective?

Look widely. Are you familiar with the source? What else does it publish and how long has it been around? What do other trusted sources say? How does this depiction compare to others that are available? Or if there aren’t others available, should that give you pause?

Then, apply your learnings

Let’s take an example and work through it together. This Facebook reel, posted by an account called “Real Talk Hub”, purports to show migrants being stopped and returned by Australian police at an airport. Before getting too granular, let’s take stock of the opening image.

The video uses scale to show what appears to be a long stream of passengers. Some are moving toward and some are moving away from a plane. It is difficult to identify specifics in the video. The superimposed text blocks almost all of the horizon line. Shallow depth of field makes aspects in the distance blurry and hard to discern.

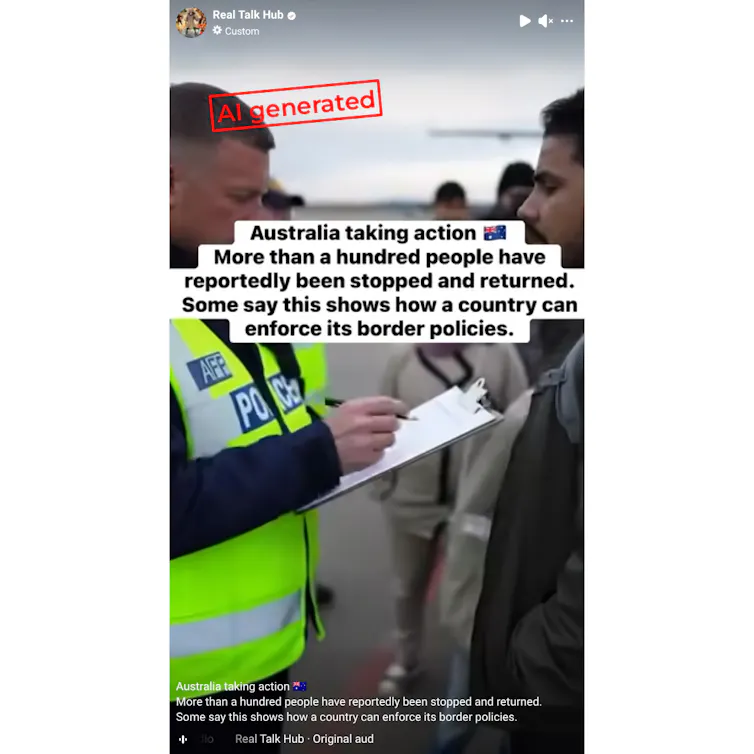

Many of the passengers have darker skin and are visually coded as “other”. They interact with a light-skinned police officer who takes notes on a clipboard. The vertical video is framed carefully to not reveal identifiers like the name of the airline that seems to start with the letter “P”. This makes it difficult to search the airline’s name and whether credible sources corroborate the story that’s told.

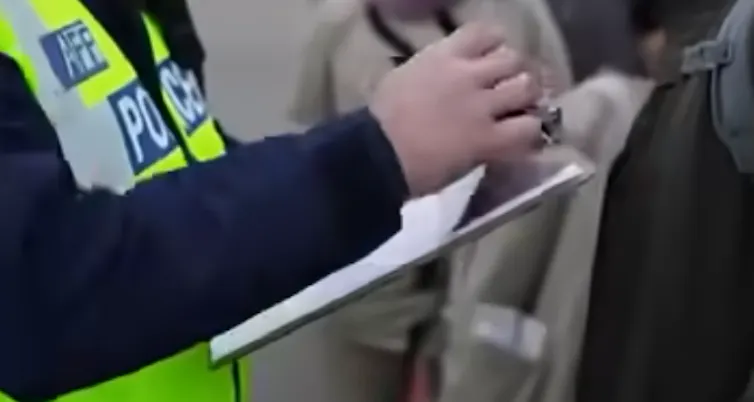

Even though the people and scenes look realistic at first glance, the video’s integrity unravels when we slow down and look closer. People in the passenger line morph and transform. The officer is able to single-handedly remove the paper from the clipboard and it appears to inexplicably leave white strips behind. The police vests look different to images you can find in verified media photos of the Australian Federal Police. Taken together, all these clues suggest the video is AI-generated.

The paper on the clipboard moves in an unrealistic way, and the police vest is not accurate. Real Talk Hub/Facebook

Think like a fact-checker

Many AI-generated videos can trick you and create a very compelling narrative. So, fact-checkers have developed triangulated methodologies that examine elements beyond just what you see in the video. One way to do this is to systematically check contextual factors – the other things surrounding the content. Our team’s research has found professional fact-checkers usually pay attention to the type of social media accounts or websites distributing suspicious media. For this AAP verification on a video about banning dogs on the beach, it was crucial to inspect the user’s activity and posting patterns. In addition to visual anomalies, the fact-checkers also found an invisible watermark that helped them determine the content was AI-generated.

Other things to check are how long a social media account has been operating, how often the social media account posts, and whether the account is transparent about its use of AI. These aren’t fool-proof indicators of authenticity, though. The migrant example above comes from an account that is about five years old. It also comes from a “verified” account, which might make it feel more credible. But both Facebook and X now let users pay for this verification.
Overall, when it comes to suspect images or video, don’t just look deeply. Also look widely. AI-generated content can increasingly fool our eyes, so you also have to look beyond what’s in the video. Taking a mixed-methods approach that considers visual and contextual clues can help. By training your ability to think like a fact-checker, you can stay safer online. Silvia Montaña-Niño, Lecturer, Centre for Advancing Journalism, The University of Melbourne and T.J. Thomson, Associate Professor of Visual Communication & Digital Media, RMIT University This article is republished from The Conversation under a Creative Commons license. Read the original article. |

Securing the Digital Lifelines of Global Connectivity (2026-06-05T12:11:00+05:30)

A U.S. Navy utilitiesman performs maintenance on subsea cables at the Pacific Missile Range Facility in Hawaii. Subsea cables are essential to global connectivity, economic exchange, and strategic coordination, and militarily, they are indispensable for national security, logistics, and coalition operations. (U.S. Navy photo by Builder 2nd Class Ben Reed/Released)

Public Space 13th April 2026

As subsea cables power the modern digital world, the United States is working with partners including India to secure these vital networks and strengthen connectivity across the Indo-Pacific.

Giriraj Agarwal, SPAN Magazine, U.S. Embassy New Delhi

Beneath the vast waters of the Indo-Pacific lies a largely unseen but indispensable network powering the modern world. Subsea cables, often overlooked in public discourse, are essential to global connectivity, economic exchange, and strategic coordination. “Subsea cables carry over 95 percent of international internet traffic, supporting everything from cross-border payments to cloud and AI services,” says an economic officer at U.S. Embassy New Delhi. “Because so many routes run through Indo-Pacific chokepoints, any cut or compromise there can send shockwaves through the global economy.” This is why securing subsea cables has become a frontline strategic issue.

For the United States and the many countries they connect, these cables are economic lifelines. “Asia-focused routes support a vast share of U.S. digital trade and services exports, and one recent estimate suggests that connectivity with the region via subsea systems adds on the order of $169 billion a year to the U.S. economy,” the officer says. “They are also the critical backbone of the AI and cloud era,” she adds, pointing to investments by major U.S. technology firms in trans-Pacific and regional cable systems to enhance speed, security, and resilience. The strategic dimension is equally significant.
“Militarily, the same fiber-optic networks are indispensable to the United States for national security, logistics, and coalition operations,” she says. “We need to protect them from physical and technical threats because these cable networks hold a real impact on how power is exercised in the Indo-Pacific.”

Building secure networks

In response, U.S. policy is increasingly focused on strengthening subsea cable infrastructure and shaping how these networks are built and secured. The Department of State’s CABLES Program, the officer explains, “aims to shape where and how new cables are built, who supplies them, and what security standards they follow.” Through partnerships with governments and industry, the program promotes best practices, route diversification, and safeguards against risks like sabotage, surveillance, and overreliance on untrusted vendors. Complementing these efforts, the Quad Partnership for Cable Connectivity and Resilience, linking the United States, India, Japan, and Australia, seeks to expand secure connectivity and strengthen network resilience across the region. “These efforts back trusted suppliers, push for high infrastructure standards, and encourage new cable routes that avoid single points of failure,” says the officer.

Partnering for digital resilience

India is emerging as an important partner in this evolving landscape. “India’s digital consumption is booming with 36 GB per user per month which is amongst the highest globally,” she notes, highlighting its nearly one billion internet users and rapidly expanding data infrastructure. Its geographic position further enhances its importance, placing it “at the crossroads of Asia, the Middle East, Africa, and Europe, astride key subsea routes.” U.S. companies are playing a central role in this transformation. “U.S. hyperscalers remain central to India’s digital ecosystem,” she says, pointing to new investments that are reshaping both India’s and the region’s connectivity by diversifying landing points. Recent projects in cities like Visakhapatnam and Mumbai are expected to broaden India’s subsea gateways, strengthen resilience, and support the AI-scale data and cloud capacity the country increasingly needs. “Beyond the infrastructure itself,” says the officer, “U.S.-India collaboration can also shape regional norms by championing transparent procurement, trusted suppliers, and high standards for secure cable development.”

Risks beneath the surface

Despite their critical role, subsea cables face a range of vulnerabilities. “The most common threat to subsea cables is surprisingly mundane: accidental human activity,” she observes, noting that fishing trawlers and ship anchors account for most of the roughly 150 cable cuts reported annually. Natural disasters like earthquakes and underwater landslides further compound the risks. Yet the growing concern lies in deliberate disruption. “In a crisis, hostile actors could sabotage cables to cripple communications and economic activity,” she says. Technical vulnerabilities also persist, as cables can be targeted for surveillance, raising concerns about espionage and data interception. Structural weaknesses like limited route redundancy can further increase vulnerability by concentrating traffic along a small number of pathways. “When a cable is cut, repairs can take days or even weeks depending on location, permits, and weather,” the officer explains. “In contested regions such as the South China Sea or the Taiwan Strait, cable disruptions could slow connectivity, interrupt financial flows, complicate military communications, and affect economies that depend heavily on digital trade.”

Insights from the Wavelength Forum

The July 2025 Wavelength Forum in New Delhi underscored the urgency of action.
“A key takeaway is that subsea cables must be treated as frontline strategic infrastructure,” she says. Participants called for regulatory reforms, including a streamlined single-window clearance system in India so installation and repairs can proceed quickly. They also emphasized the importance of diversifying landing stations beyond a few coastal cities and strengthening inland connectivity to reduce systemic vulnerabilities. Another critical gap identified was repair readiness in India. “Vessels often take too long to reach fault sites, prolonging outages and raising economic and security risks,” the officer notes. Overall, the forum reinforced that cable protection is inseparable from economic security, digital resilience, and geopolitical stability. Industry leaders at the forum agreed that, looking ahead, India’s priorities should include streamlining approvals for cable installation and enabling rapid emergency repairs so that faults can be resolved quickly. Above all, international cooperation is essential. “Stronger international cooperation, particularly between the United States and India, can promote trusted suppliers, better information-sharing, and common standards for secure cable development across the Indo-Pacific.” Securing the Digital Lifelines of Global Connectivity | MorungExpress | morungexpress.com |

Women spend nearly 50 pc more time than men on digital platforms: Report (2026-06-03T13:15:00+05:30)

(AI Image/IANS)

New Delhi, (IANS) Women users are the strongest drivers of digital engagement in urban India, spending more time than men across categories such as entertainment, messaging and e-commerce or quick commerce, a report said on Monday. The joint report from consumer behaviour analytics platform VTION and the Internet and Mobile Association of India (IAMAI) found that women spent more time than men across most categories, with the sharpest differences visible in commerce platforms. It highlighted that women spend an average of 82.4 minutes per day on entertainment-related content. Women aged between 25 and 34 peaked in this category with 86.3 minutes of consumption per day. In the e-commerce or quick commerce category, the 25-34 urban female cohort in megacities averaged 35.2 minutes per day compared to 24.8 minutes for urban male users, reflecting a 42 per cent higher engagement level.

AI apps are witnessing rapid adoption as a new daily digital habit in urban India, with the category recording over 100 per cent growth during April 2025-March 2026 and urban users spending an average of 11.3 minutes per day on AI applications. AI application usage is currently concentrated among 18–34-year-olds and higher-income urban households. Conversational AI is increasingly influencing how users discover brands and products online, with consumers turning to AI tools before opening traditional search or e-commerce platforms, the report noted.

Young, mass-market urban users aged 18–24 drive social media use, averaging 120 minutes per day compared to the overall category average of 97.9 minutes per day. Urban consumers aged above 35 anchored the entertainment category, consuming content for 77-78 minutes per day. Payment app engagement patterns remain largely consistent across higher-, middle- and lower-income urban households. The report analysed data from over 1 lakh consented smartphones representing over 407 million urban Indians. Women spend nearly 50 pc more time than men on digital platforms: Report | MorungExpress | morungexpress.com |
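
The 42 per cent figure quoted above for e-commerce and quick commerce can be reproduced from the two daily averages the report gives (35.2 and 24.8 minutes). The following is a minimal sketch of that arithmetic; the function name `percent_higher` is an illustrative assumption and does not come from the report.

```python
def percent_higher(value: float, baseline: float) -> float:
    """Return how much higher `value` is than `baseline`, in per cent."""
    return (value - baseline) / baseline * 100


# Daily averages quoted in the report for the 25-34 megacity cohort.
women_minutes = 35.2
men_minutes = 24.8

gap = percent_higher(women_minutes, men_minutes)
print(f"{gap:.1f} per cent higher")  # prints "41.9 per cent higher"
```

Rounded to the nearest whole number, this matches the 42 per cent stated in the report.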

What is a ‘digital detox’ and will it make me healthier? (2026-05-28T12:51:00+05:30)

Joanne Orlando, Western Sydney University

Are you surrounded by screens? Today, we rely on technology to do everything from sending emails to ordering food. But being constantly connected can leave us physically and mentally exhausted. That’s why some people are doing “digital detoxes”, the practice of staying away from devices and social media for a set period of time. The concept is gaining traction online, with supporters spruiking the health benefits of the “analogue lifestyle”. Some are even paying big bucks to go on “digital retreats”, with the aim of becoming healthier and happier. But do digital detoxes actually work, or are they just another wellness trend?

What is a ‘digital detox’?

The term “digital detox” stems from detoxification, the process of safely getting a person off an addictive substance such as alcohol or drugs. This is usually done with support from a health-care professional. So the idea of a digital detox is to step away from technology, to instead experience life with fewer distractions and foster relationships offline.

The trouble with tech

On average, young people in Australia look at screens for nine hours a day. Research suggests adults aren’t much better, with Australians aged between 45 and 64 spending up to six hours each day on screens. As a result, more people are experiencing information overload, the idea of being physically and emotionally overwhelmed by an immense amount of data. A related concept is social media fatigue, a consequence of being constantly connected through online platforms. But there are signs people are resisting the pull of technology. Some younger people are swapping screens for hands-on hobbies such as knitting, and joining chess clubs and other offline social activities. They are also driving trends such as “raw-dogging boredom”, the practice of sitting through long haul flights without headphones.
And friction-maxxing, the idea you can become a better, more resilient person by doing tasks that involve some level of difficulty, is also gaining traction online. So in a sense, digital detoxes are just the latest online trend.

Do ‘digital detoxes’ work?

Current research suggests digital detoxes may have some benefits. But the evidence is far from conclusive. One 2025 meta-analysis examined 20 randomised controlled trials, all looking at the effects of social media detoxes. It found taking a short break from social media had a small but positive effect on people’s feelings of life satisfaction and self-esteem. Participants also reported feeling less anxious, depressed and lonely. In another 2025 study, researchers blocked participants’ smartphones so they could only receive calls and texts, over a two-week period. The results were striking. The researchers found this intervention had a greater positive effect on participants’ mental health than antidepressants. Importantly, this was because participants spent less time on their phones, but also spent this time doing beneficial activities such as socialising in person, exercising and being in nature.

Not for everyone

Digital detoxes may impact people differently, due to various factors. One is cultural context. Research suggests people using social media in collectivist cultures such as Turkey may experience more social pressure to respond quickly and maintain extensive networks, compared to those in more individualistic societies. So people in collectivist cultures may benefit more from taking a break from social media. Another is gender. Research suggests women mainly use social media to maintain relationships, and that they compare their physical appearance to others. This means they may benefit more from a digital detox, compared to men. One 2020 study found women who took a one-week break from Instagram felt significantly more satisfied with their life than women who stayed on it.
However, the researchers did not see the same effect in men.

All about the approach

Current research suggests doing a digital detox may improve your mental health. But the way you approach it matters. You shouldn’t just go cold turkey on technology. That’s because you’re less likely to sustain that change. One 2023 study found people who reduced their daily smartphone use by one hour experienced stronger and more lasting mental health benefits, compared to those who quit entirely. Here are some tips to make your digital detox last:

It’s hard to stay present and connected in our increasingly digital world. But doing a digital detox could help. Importantly, the aim is not to eliminate technology from your life, but to use it in a more conscious, deliberate way. Joanne Orlando, Researcher, Digital Wellbeing, Western Sydney University This article is republished from The Conversation under a Creative Commons license. Read the original article. |

US NRC approves Limerick digital retrofit project (2026-05-05T13:32:00+05:30)

(Image: Constellation)

The US Nuclear Regulatory Commission has approved what is described as a first-of-a-kind project to replace analogue instrumentation and control equipment with digital systems. The Limerick Clean Energy Center in Pennsylvania, USA, is a nuclear power plant featuring two boiling water reactors with a combined capacity of 2,317 MW. The first unit entered commercial service in 1986 and the second unit in 1990. They are currently licensed to operate until 2044 and 2049, respectively.

Constellation says the USD167 million Digital Modernization Project will "enhance reliability, diagnostic capability and cyber resilience" and "improve equipment monitoring, provide a broader range of automation and support additional operational flexibility with enhanced reliability". It will be the first large-scale upgrade to a digital safety system at an operating nuclear plant in the US, supported by the US Department of Energy's Light Water Reactor Sustainability Program. The installation of the new system will take place in phases, and digital control rooms will be installed during refuelling outages.

The Nuclear Regulatory Commission said in its announcement: "As the nuclear industry advances toward next-generation power reactors equipped with state-of-the-art digital instrumentation and control (I&C) systems, much of today’s operating fleet still relies on analogue controls. While reactor operators have leveraged existing regulatory flexibilities to implement targeted digital upgrades, the NRC’s approval of the Limerick amendments represents a broader and more comprehensive approach. Limerick will be the first operating nuclear power plant the NRC has authorised to perform a major digital retrofit that replaces multiple analogue safety systems with a single digital plant protection system.

"The change modernises the control room by replacing legacy equipment with modern digital controls and displays."
It said that the approval of licence amendments for Limerick's modernisation project paves the way for future digital I&C modernisation at other US nuclear power plants. Assistant Secretary for Nuclear Energy Ted Garrish said: "Upgrading nuclear power plants with advanced digital systems will help ensure that Americans continue to have access to affordable and abundant energy today and in the future." Joe Dominguez, President and CEO of Constellation, said: "Every dollar we invest to enhance and modernise the nation's largest nuclear fleet will pay dividends for American families and businesses by creating jobs, keeping costs down, improving reliability and adding much-needed capacity to fuel economic growth."

Baltimore-based Constellation operates 14 nuclear power plants in the USA with a combined generating capacity of more than 19,000 MWe. These are: Braidwood, Byron, Calvert Cliffs, Clinton, Dresden, FitzPatrick, LaSalle, Limerick, Nine Mile Point, Peach Bottom, Quad Cities, R E Ginna, Salem and South Texas Project. US NRC approves Limerick digital retrofit project: |

India needs 3.2 million green‑skilled workers by 2030: Report (2026-04-23T15:04:00+05:30)

(AI image/IANS)

Mumbai, (IANS) Nearly 45 per cent of core skills are projected to change by 2030 and India will need 3.2 million additional green‑skilled workers by then, a report said on Wednesday. The report from KPMG in India and the Confederation of Indian Industry (CII) said the current MSME talent landscape is characterised by fragmented skill levels, limited formal skilling exposure, and varying degrees of digital readiness. Only about 10 per cent of the MSME workforce has formal vocational training and nearly 69 per cent of MSMEs struggle to source skilled talent, the report said. The report outlined how India’s MSMEs can build digitally fluent, AI‑enabled and green‑ready talent to remain competitive.

India’s MSME sector employs 32.84 crore people and contributes 30.1 per cent of GDP and is at a defining moment where talent will determine long‑term resilience and growth, it added. Each MSME worker delivers only 14 per cent of the productivity of a large‑enterprise worker, pointing to huge room for growth in India's MSME sector. The future of MSME work will be shaped by the twin transition and AI adoption, moving work from manual tasks to human–machine collaboration. The report outlined six strategic imperatives to drive transition readiness: building an AI‑ready workforce, apprenticeships aligned with digital tools, skills‑first hiring, ONDC‑led digital market access, cluster‑based skill ecosystems, and inclusive talent practices.

“AI will change how MSMEs operate, but skills will determine whether it creates advantage or risk. The twin transition demands a digitally fluent, sustainability‑aware workforce ready to innovate at speed,” said Sunit Sinha, Partner and Head, Human Capital Advisory, KPMG in India. Naveen Aggarwal, Office Managing Partner, Delhi-NCR, KPMG in India called MSMEs the heartbeat of India’s economic ambition, adding that the next wave of growth will be led by those that invest in high‑value talent and governed capability. India needs 3.2 million green‑skilled workers by 2030: Report | MorungExpress | morungexpress.com |

Library is Rescuing Historical Treasures Trapped on Old Floppy Disks from the ‘Digital Dark Ages’ (2026-04-10T13:16:00+05:30)

Credit – Cambridge University Library

Cambridge University archivists are leading an important project to extract and conserve valuable information from floppy disks before they become unusable. The initiative began when the archive received a box of 5.25-inch floppy disks from a DOS-formatted computer that belonged to none other than physicist Stephen Hawking, who was able to use early computers despite his disability from ALS. The challenges a group of archivists encountered when they attempted to read the disks helped them realize how vulnerable this funny, briefly adopted technology, which predates compact discs, is to the ravages of time, and that the clock was ticking to get important information off them before they became unusable. It spawned a project, aptly named in our current pop-culture environment: “Future Nostalgia.”

Before the term was chased from the historical lexicon with torches and pitchforks, “the Dark Ages” was used to describe the period in European history when primary source writings are particularly scant, between the fall of Rome and the Middle Ages. The Future Nostalgia project presents the case that the late 20th century may form a sort of dark ages when historians in the future look back on our time and see a big hole in early computer writings. Certainly books and magazines and newspapers are available a-plenty, but if floppy disks and other early technologies aren’t kept in good order, early computer writings may seem sparse to future historians.

Floppy disks present numerous challenges to archivists, among which are the multiple formats they were built and coded for. “There wasn’t one system that dominated the market,” explains Leontien Talboom, a member of the Cambridge University Library’s digital preservation team who is leading the project.
That means that as many as a dozen different early computing systems are needed to read the full spectrum of floppy disk formats, and it’s not always straightforward to find these machines. Nor is it a given that the disks themselves are readable. They may be moldy, if stowed away in an attic for example. Iron oxide on the surface of the plastic may corrode material away. It can also lose its magnetism, preventing it from being read entirely. That is why Talboom and her team are urgently trying to acquire collections of noteworthy writers or authors, like Hawking, and digitize them from their early floppy disk format. So far, in addition to Hawking, they’ve uncovered abstract lists by the poet Nicholas Moore, articles from a society of the paranormal, and more.

“Most of the donations we get are from people who are either retiring or passing away,” Talboom told the BBC. “That means we’re seeing more and more things from the era of personal computing.” Not only are donations coming from those who have retired or passed away, but so is a lot of information on how to use different formats. An example comes from the archivists’ work with a set of floppy disks that contained speeches and letters with constituents of Neil Kinnock, a UK Labour Party leader in the 1980s. “They were written on the Diamond Word processor,” explained Chris Knowles, a participant in the Future Nostalgia project. “There’s not much information about that system out there. There are lots of fan communities around any system that had games, and archivists often borrow their tools. But where that doesn’t exist, it’s more awkward.”

Work continues, and Talboom is more and more eager to have the public’s involvement with the project.
She sees it as a win-win partnership: owners of floppy disks get to see what kind of materials their old colleagues or family members wrote onto them, and Future Nostalgia gets more material, but also more knowledge and practice about how to access and preserve floppy disk formats and the material they contain. Library is Rescuing Historical Treasures Trapped on Old Floppy Disks from the ‘Digital Dark Ages |

An ‘AI afterlife’ is now a real option – but what becomes of your legal status? (2026-04-02T13:30:00+05:30)


Would you create an interactive “digital twin” of yourself that can communicate with loved ones after your death? Generative artificial intelligence (AI) has made it possible to seemingly resurrect the dead. So-called griefbots or deathbots – an AI-generated voice, video avatar or text-based chatbot trained on the data of a deceased person – proliferate in the booming digital afterlife industry, also known as grief tech. Deathbots are usually created by the bereaved, often as part of the grieving process. But there are also services that allow you to create a digital twin of yourself while you’re still alive. So why not create one for when you’re gone? As with any application of new technology, the idea of such digital immortality raises many legal questions – and most of them don’t have a clear answer.

Your AI afterlife

To create an AI digital twin of yourself, you can sign up for a service that provides this feature, and answer a series of questions to provide data about who you are. You also record stories, memories and thoughts in your own voice. You might also upload your visual likeness in the form of images or video. The AI software then creates a digital replica based on that training data. After you die and the company is notified of your death, your loved ones can interact with your digital twin. But in doing this, you’re also delegating agency to a company to create a digital AI simulation of yourself after death.

From the get-go, this is different to using AI to “resurrect” a dead person who can’t consent to this. Instead, a living person is essentially licensing data about themselves to an AI afterlife company before they’ve died. They’re engaging in a deliberate, contractual creation of AI-generated data for posthumous use. However, there are many unanswered questions. What about copyright? What about your privacy? What happens if the technology becomes outdated or the business closes? Does the data get sold on?
Does the digital twin also “die”, and what effect does this second loss have on the bereaved?

What does the law say?

Currently, Australian law doesn’t protect a person’s identity, voice, presence, values or personality as such. In contrast to the United States, Australians don’t have a general publicity or personality right. This means, for an Australian citizen, there’s currently no legal right for you to own or control your identity – the use of your voice, image or likeness. In short, the law doesn’t recognise a proprietary right in most of the unique things that make you “you”.

Under copyright law, the concept of your presence or self is abstract, much like an idea is. Copyright doesn’t offer protection for “your presence” or “the self” as such. That’s because there has to be material form in specific categories of works for copyright to exist: these are tangible things, such as books or photos. However, typed responses or the voice recordings submitted to the AI for training are material. This means the data used to train the AI to create your digital twin would likely be protectable. But fully autonomous AI generated output is unlikely to have any copyright attached to it. Under current Australian law, it would likely be considered authorless because it didn’t originate from the “independent intellectual effort” of a human, but from a machine.

Moral rights in copyright protect a creator’s reputation against false attribution and against derogatory treatment of their work. However, they wouldn’t apply to a digital twin. This is because moral rights attach to actual works created by a human author, not any AI-generated output. So where does that leave your digital twin? Although it’s unlikely copyright applies to AI-generated output, in their terms and conditions companies may assert ownership of the AI-generated data, users may be granted rights in outputs, or the company may reserve extensive reuse rights. It’s something to look out for.
There are ethical risks, too

Using AI to make digital copies of people – living or dead – also raises ethical risks. For example, even though the training data for your digital twin might be locked upon your death, others will be accessing it in the future by interacting with it. What happens if the technology misrepresents the deceased person’s morals and ethics?

As AI is usually probabilistic and based on algorithms, there may be a risk of creep or distortion, where the responses drift over time. The deathbot could lose its resemblance to the original person. It’s not clear what recourse the bereaved may have if this happens.

AI-enabled deathbots and digital twins can help people grieve, but the evidence so far is largely anecdotal – more study is needed. At the same time, there’s potential for bereaved relatives to form a dependence on the AI version of their loved one, rather than processing their grief in a healthier way. If the outputs of AI-powered grief tech cause distress, how can this be managed, and who will be held responsible?

The current state of the law clearly shows more regulation is needed in this burgeoning grief tech industry. Even if you consent to the use of your data for an AI digital twin after you die, it’s difficult to anticipate how new technologies will change the way your data is used in the future. For now, it’s important to always read the terms and conditions if you decide to create a digital afterlife for yourself. After all, you are bound by the contract you sign.

Wellett Potter, Senior Lecturer in Law, University of New England

This article is republished from The Conversation under a Creative Commons license.

The fading line between the digital world and reality (2026-04-02T13:29:00+05:30)

Photo Courtesy: Image by Gerd Altmann from Pixabay | For representational purpose only

Tashvi Aneja, First Year BTech Student, Plaksha University

Scrolling aimlessly, swiping subconsciously, and clicking with every blink - a revolutionized digital world now surrounds us so densely that our ambitions, fears and identities are governed as much by code and pixels as they are by physical experiences. Picture this: a world where you can no longer tell whether a memory or conversation was real; where you're not sure if a face or friendship is simply Artificial Intelligence; where the digital version of yourself feels more admired and authentic than your real reflection. This is not fiction. The boundary between the digital world and reality is fading rapidly. The old saying 'seeing is believing' no longer holds. Many of us already live in overlapping universes, where the digital world does not just co-exist with our physical world but might be overshadowing it.

AI has rapidly become an uncredited co-author across text, images, video, and web content, generating highly personalized work at scale. Though it recombines existing data rather than creating truly original ideas, it produces convincing authenticity. Automated Insights alone generated over one billion algorithmic stories in 2014, and today many top search results contain AI-written text. As readers struggle to distinguish human from machine output and detection tools remain unreliable, questions of trust, credibility, and authorship grow more urgent in a world where digital and human creation increasingly blur.

Human decisions stem from past experiences and knowledge, which leave digital footprints. Algorithms capture these footprints as data proxies to influence and engineer what we see, feel, and do, creating an echo chamber that reinforces existing views and blocks alternative ideas, all disguised as personalization.
Our social media feeds, news suggestions, and video recommendations are cleverly curated by opaque algorithmic systems which push filtered narratives. This biased content often grabs users' attention and molds perceptions without anyone questioning the complete picture. We believe it to be real and factual without realizing that our remote control is in the hands of a system, a system which has become our master.

Earlier, youth identities were built internally; now they are externally anchored. The youth identity crisis is becoming increasingly prevalent, with most of it shaped by a digital ecosystem where young people's sense of self is constantly split across platforms. The sheer number of digital spaces creates feelings of self-fragmentation and a lack of temporal narrative coherence, making it hard for adolescents to form a stable identity. Their focus on what gets them attention, instead of what truly reflects them, causes constant shape-shifting to please others or fit algorithmic trends. They end up managing multiple personas and splitting their online and offline selves. As they attempt to pull together the scattered pieces of themselves across several platforms and media, they struggle to understand and define their true selves, contributing to imposter syndrome amongst individuals, particularly youth.

Imposter syndrome is also magnified by algorithms for content creators. Creators may feel that their success is a byproduct of virality or algorithmic luck, not genuine talent. They ask themselves, "Am I good, or am I just performing for the algorithm?" They often feel the pressure to stay relevant and present an ever-sharpened version of themselves, even if it is not their authentic self. Chasing engagement to please others masks their real identity behind a digital persona. Additionally, with the growing use of AI, authenticity comes into question for content creators. Beyond imposter syndrome, increasing online interactions raise other mental health concerns, too.
FOMO (Fear of Missing Out) is not new, but over-reliance on the digital world supercharges it. A study found that exposure to idealised athletic images reduced self-esteem in 37% of participants, particularly young women who frequently compare themselves to influencers. Constantly scrolling through curated highlights makes ordinary life feel inadequate, ignoring that social media mostly showcases highs, not lows. With AR filters and deepfakes amplifying perfection, comparison intensifies. Profile curation, unrealistic standards, and the pursuit of validation tie self-worth to likes and followers rather than real achievements. This fuels anxiety, depression, sleep disorders, and a deep erosion of self-confidence. Moreover, since a large chunk of our thinking happens in digital contexts, neural pathways adapt and optimize information processing, decision making, and problem solving for digital media. While some argue that this improves multitasking, it also reduces focus and inhibits deep and critical thinking.

Eventually, our real life begins to echo the digital one, and vice versa. The real world is no longer just mirroring the digital world; it is amplifying the crisis of identity and algorithmic control. Chatbots and algorithms reflect our interests and behaviours back to us, creating feedback loops where online trends begin shaping offline actions. Search for one product and you're soon surrounded by ads and recommendations. Over time, this repetition does more than guide behaviour: it shapes perception, making digital cues feel more real than lived experience. As dependency grows, the line between perception and presence blurs, and offline life begins to mirror digital patterns. Our digital footprint feeds predictive systems, shifting thought from self-directed to system-conditioned. Immersive technologies like VR intensify this effect, as the brain records virtual input as real, sometimes creating altered or false memories.
Reality is no longer binary but a spectrum, where people increasingly live, choose, and connect within hybrid digital-physical spaces.

As more interactions happen online through screens, filters, and bots, trust weakens. We often can't tell real from fake. Is it edited or genuine? We miss tone and body language, so misunderstandings grow. In virtual friendships or relationships, it's hard to know what's truly genuine, making trust feel uncertain. With the rise of fake and synthesised content, we are forced to question what is real anymore. Hyper-realistic videos, AI-generated conversations, and filtered identities blur authenticity to the point where trust feels fragile. Suspicion seeps into everything: memories, friendships, even our own perceptions. We now look at a striking photograph or a beautifully written essay and assume it must be AI-made. Instead of appreciating genuine talent, we question its authenticity. This raises a chilling possibility: are we losing the ability to recognise what is real, or worse, to trust ourselves?

Individuals should audit their digital footprint, limit screen time, prioritise real connections, and stay mindful of how algorithms shape perception. Educators must strengthen digital literacy and teach students to spot deepfakes and cope healthily. Creators should build trust through authenticity and transparency about AI use. Parents need to model balanced tech habits and foster safe spaces at home. Tech companies must focus on ethical design and user control, while policymakers enforce safeguards for fairness, safety, accountability, and youth protection.

Today, we stand at a crossroads between a dazzling hyperconnected digital world and the timeless richness of physical reality. The danger isn't simply one overtaking the other; it's forgetting the value of both. Instead of letting the digital realm shape our entire lives, we should simply let it amplify our humanity.
No algorithm, no VR system, no AI-generated masterpiece can truly replace the real - real conversation, real touch, real us. The future belongs to those who master their minds, not their feeds. Technology should widen our perception, not shrink our sense of self.

The fading line between the digital world and reality | MorungExpress | morungexpress.com

Indian banks benefit from AI‑driven operating models: Report (2026-03-20T11:30:00+05:30)

(AI image/IANS)

New Delhi (IANS): Indian banks are benefiting from sustained credit growth, deeper digital public infrastructure, rapid adoption of AI‑driven operating models, and heightened regulatory focus on climate risk, cyber resilience and governance, a report said on Friday.

The report from KPMG International said the sector is on par with global peers in scaling from pilots to enterprise AI use, investing in workforce reskilling and strengthening cybersecurity and ESG frameworks to support long‑term resilience.

Based on a survey of 110 global Banking and Capital Markets CEOs, the report found 83 per cent are confident about growth over the next three years and 65 per cent ranked AI as a top investment priority. Around 70 per cent of CEOs said they plan to allocate 10–20 per cent of next‑12‑month budgets to AI, while 59 per cent expect agentic AI to have a transformational impact and 69 per cent expect returns within one to three years.

"Around 83 per cent banking and capital market CEOs are prioritising reskilling for AI; 79 per cent say AI has redefined entry‑level skills whereas 78 per cent warn AI workforce readiness could negatively impact the organisation if not addressed," the report said.

“As global banking leaders respond to rising operational and regulatory costs by pursuing scale and strategic M&A, the same imperative is increasingly shaping the Indian banking sector,” said Sanjay Doshi, Partner and Head, Transaction Services and Financial Services Advisory, KPMG in India.

Doshi said that for India, scale is more than just size: it is a catalyst for expanding distribution, accelerating digital transformation and enhancing cost efficiency.
As banks deepen their investments in technology and modernize their operating models, selective consolidation and partnership‑led growth can unlock new markets, strengthen value propositions and build long‑term competitive resilience, he added.

Around 86 per cent of CEOs cited cyber insecurity as the top growth threat, 56 per cent cited ethical challenges, and 55 per cent pointed to data readiness and regulatory gaps.

Indian banks benefit from AI‑driven operating models: Report | MorungExpress | morungexpress.com